How AI Is Reshaping Cybersecurity for Businesses of Every Size

Until early April 2026, the standard line in any AI-and-cybersecurity briefing was that nobody yet knew whether AI helped the attacker or the defender more. That sentence is now dated.

On April 7, Anthropic introduced Claude Mythos Preview, a frontier model the company itself decided not to ship publicly. The reason was unusual: the model was too capable at finding zero-day vulnerabilities. In Anthropic’s own internal testing, Mythos surfaced flaws in every major operating system and web browser, including a 27-year-old remote crash in OpenBSD and a 17-year-old root exploit in FreeBSD now triaged as CVE-2026-4747. On the same vulnerability-discovery benchmark where the previous flagship model produced almost zero working exploits, Mythos produced 181.

For business leaders, the salient detail isn’t the headline number. It’s that the capability jump didn’t come from offensive-security training. It came from general improvements to code reasoning, which means every frontier model that follows is on the same trajectory. Former U.S. national cyber director Kemba Walden told Fortune the day after the announcement that Mythos-class tooling could “hack nearly anything,” and that the country’s critical infrastructure was not ready.

From AI-assisted attacks to AI-driven attacks

That phrasing is not ours. It comes from Palo Alto Networks’ “Defender’s Guide to the Frontier AI Impact on Cybersecurity,” published April 2026, which the company built after testing Mythos through Anthropic’s Project Glasswing partnership and OpenAI’s Trusted Access for Cyber program. The team’s blunt finding: a frontier model now performs the equivalent of a full year of human penetration testing in roughly three weeks, and is “exceptionally effective” at chaining lower-severity issues into critical exploit paths.

Translated to risk language, the window between a vulnerability shipping and a working exploit existing has gone from weeks-or-months down to hours. Software that was technically vulnerable but practically safe — because no human researcher had bothered to look — is no longer practically safe.

Palo Alto’s 2026 predictions push the implications further. The firm forecasts that identity will become the primary battleground as AI-generated “CEO doppelgängers” make voice and video forgery indistinguishable from the real thing, magnified by an 82:1 machine-to-human identity ratio inside the average enterprise. And it warns that with only 6% of organizations reporting an “advanced” AI security strategy, the gap between adoption and oversight will produce the first executive-liability lawsuits over rogue AI agents.

The threat side has industrialized too

Fortinet’s 2026 Cyberthreat Predictions, which FortiGuard Labs has been updating into Q2, describes an offense ecosystem that no longer looks improvised. The firm’s analysts call it “the industrialization of cybercrime”: agentic AI swarms coordinating tasks semi-autonomously, specialized AI agents purpose-built for credential theft, lateral movement and data monetization, and supply-chain attacks tuned for AI and embedded systems.

The proof is already on the wire. A field campaign first reported in February 2026 saw an AI-assisted threat actor compromise more than 600 FortiGate devices across 55 countries in roughly five weeks. Investigators traced the speed and breadth to AI-generated scanning and exploitation pipelines that no equivalent-sized human team could match.

In Fortinet’s framing, defenders’ counter is “machine-speed defense” — a continuous loop of intelligence, validation and containment that compresses detection-and-response from hours to minutes. The same report notes that only 18% of organizations have AI-driven threat detection fully operational across their cloud environments; another 32% are still in pilot. The technology exists. Most teams have not turned it on.

What this looks like at small and mid-sized businesses

A 25-person law firm is not personally interesting to the operator of a Mythos-class scanner. But the firm’s practice-management software, its email vendor, and its bookkeeping platform absolutely are — and that’s where the disproportionate risk now sits.

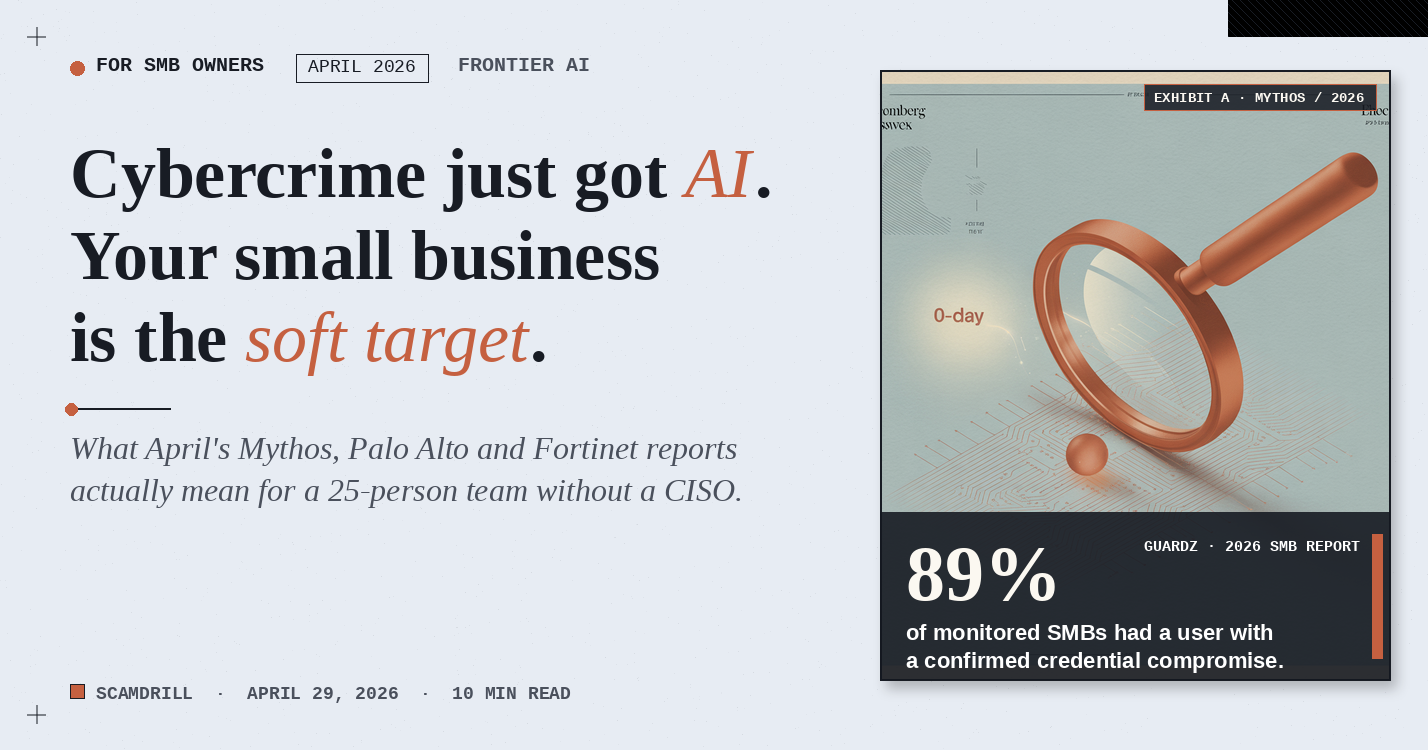

A January 2026 Guardz report on the SMB segment found that 89% of monitored small businesses had at least one user with confirmed credential compromise at any given moment, with nearly a third of users (31%) exposed to a compromised password monthly. Abuse of remote-monitoring-and-management tools — the kind an MSP relies on — accounted for 26% of all endpoint detections. Identity is the loose thread, and AI-driven attackers are pulling on it across thousands of SMBs in parallel.

The front door is still where they walk in

It’s worth saying out loud: while Mythos-class scanners are getting the headlines, the front door is still where most attackers actually walk in. Phishing, pretexting and business email compromise account for the overwhelming majority of breaches that cost SMBs real money. Verizon’s breach data has put the share of incidents involving a deceived person at roughly 68% for years running, and the FBI’s 2024 IC3 report logged $2.77 billion in BEC losses alone. Frontier AI hasn’t replaced that pattern. It has amplified it.

Spear-phishing emails now mirror your tone, your invoice templates and your client list because an LLM did the cleanup. Deepfake voice calls land on bookkeepers who had no warm-up. AI-generated “CEO doppelgängers” (Palo Alto’s phrase) push wire approvals through video calls. The exotic exploit chain is a real risk. It isn’t the most common one. The most common one is still a 9 a.m. email that looks exactly like the one your bookkeeper was expecting — and a team that has never been trained to spot the tell.

That’s precisely the gap ScamDrill exists to close. We cover the front-door tactics in depth in our guide to social engineering targeting SMBs and in the AI voice-cloning playbook; the rest of this post stays focused on the back door.

What’s changed for the SMB defender is mostly throughput. Three years ago, a phishing email tailored to your CFO with the exact phrasing your bookkeeper uses was rare because it took an attacker an hour to write. Today, the same email costs around twenty cents of inference time. The defenses you’d have written down in 2023 are still right. The cost of failing to apply them has gone up.

Five things to do before next quarter — regardless of headcount

These are the controls that cut the most risk per dollar in light of what Mythos, the Palo Alto guide, and the Fortinet forecast actually changed.

- Patch faster, and pre-commit to it. Mythos-class tooling collapses the window between disclosure and weaponization. A documented 72-hour SLA for critical patches, with named owners and a quarterly audit, is the single highest-leverage policy you can write this week.

- Phishing-resistant MFA on every account that touches money or email. SMS and app-push MFA fall to off-the-shelf adversary-in-the-middle kits; passkeys and FIDO2 hardware keys do not. The cost is roughly $30 per user.

- Out-of-band callback for any payment change. Voice clones beat almost every other identity check. A pre-verified phone number plus a code word does not.

- Run continuous, simulated phishing. Annual training is simply not enough. Monthly simulations cut click-through 70–90% within a year and keep AI-tailored lures in front of the team that has to recognize them.

- Inventory and constrain agentic AI access. Every connector you give an internal chatbot is a credential a future attacker will try to reuse. Treat AI agents as identities — provision, audit and revoke them like employees.
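
The 72-hour patch SLA in the first item is easy to write down and easy to lose track of. As a minimal sketch of the quarterly audit, assuming you keep a simple record of each critical patch (the `PatchItem` fields and the `sla_breaches` helper are hypothetical names for illustration, not part of any particular tool), a check might look like this:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional

# The 72-hour window for critical patches named in the policy above.
CRITICAL_SLA = timedelta(hours=72)


@dataclass
class PatchItem:
    """One critical patch, tracked from disclosure to deployment (hypothetical record)."""
    system: str
    owner: str                       # named owner accountable for the patch
    disclosed_at: datetime           # when the critical fix was published
    patched_at: Optional[datetime] = None  # None means still unpatched

    def deadline(self) -> datetime:
        return self.disclosed_at + CRITICAL_SLA

    def breached(self, now: datetime) -> bool:
        """True if the patch landed late, or is still missing past the deadline."""
        finished = self.patched_at or now
        return finished > self.deadline()


def sla_breaches(items: List[PatchItem], now: datetime) -> List[PatchItem]:
    """Return every item that missed the 72-hour window as of `now`."""
    return [item for item in items if item.breached(now)]


if __name__ == "__main__":
    utc = timezone.utc
    now = datetime(2026, 5, 4, 12, 0, tzinfo=utc)
    items = [
        PatchItem("mail-gateway", "alex", datetime(2026, 4, 30, tzinfo=utc)),
        PatchItem("crm", "sam", datetime(2026, 5, 1, tzinfo=utc),
                  patched_at=datetime(2026, 5, 2, tzinfo=utc)),
    ]
    for item in sla_breaches(items, now):
        print(f"{item.system}: overdue, owner {item.owner}")
```

The check counts a late patch as a breach even after it has been applied, so the audit reflects how the team actually performed and not just what is still open.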

Three quiet shifts to watch

- Vulnerability disclosure timelines compress. Expect vendors to ship fixes ahead of public disclosure, because exploit code now appears the same day.

- MSPs and SaaS vendors become the highest-value targets. One compromised RMM tool yields hundreds of customer environments.

- “Did a person actually do this?” becomes a routine question. Wire approvals, password resets and vendor changes increasingly need a non-AI-falsifiable second factor.
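
One way to make that second factor concrete is the out-of-band callback described earlier: a payment change goes through only if staff called a number that was on file before the request arrived and the person who answered gave the agreed code word. The sketch below is illustrative only; `VendorRecord`, `ChangeRequest` and `approve_payment_change` are hypothetical names, and a real process would also log who made the call.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class VendorRecord:
    """Details verified in advance, before any change request exists."""
    name: str
    verified_phone: str
    code_word: str


@dataclass(frozen=True)
class ChangeRequest:
    """What staff recorded while handling a payment-change request."""
    vendor: str
    number_dialed: Optional[str]    # the number staff actually called, if any
    code_word_given: Optional[str]  # what the person on the call said


def _digits(phone: str) -> str:
    # Compare phone numbers on digits only, ignoring spaces and punctuation.
    return "".join(ch for ch in phone if ch.isdigit())


def approve_payment_change(req: ChangeRequest,
                           vendors: Dict[str, VendorRecord]) -> bool:
    """Approve only when both out-of-band checks pass; deny by default."""
    record = vendors.get(req.vendor)
    if record is None or req.number_dialed is None or req.code_word_given is None:
        return False
    called_known_number = _digits(req.number_dialed) == _digits(record.verified_phone)
    code_word_ok = (req.code_word_given.strip().casefold()
                    == record.code_word.strip().casefold())
    return called_known_number and code_word_ok
```

The important design choice is that the number in the request itself is never trusted: a call to whatever number the email or voicemail supplied fails the check, however convincing the voice on the other end.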

For the operations side, Palo Alto’s Unit 42 launched a Frontier AI Defense practice in April aimed specifically at evaluating and hardening AI agents that are already deployed inside customer environments. The team also published a CISO checklist that doubles as a usable starting policy for organizations that don’t have a CISO.

Practice still beats policy.

ScamDrill’s organization plans send realistic, current-threat phishing simulations to your team and turn every “oops” into a 60-second teaching moment. When an AI-tailored attack lands, it lands on a trained inbox.

See the organization plan →

The honest read

Mythos won’t be the last model of its kind. Anthropic is releasing it carefully — Project Glasswing comes with up to $100 million in usage credits earmarked for partners hardening foundational software, plus $4 million in donations to open-source security work. That’s real, and it buys defenders some lead time. But every comparable lab is on a similar curve, and not all of them will choose to gate access. The right planning assumption is that within 18 months, a near-Mythos capability is in the hands of a moderately funded threat actor.

The shape of cyber-defense for businesses of every size now narrows to one job: keep the human attack surface small, the patching cadence honest, and the identity story clean. The work isn’t new. The pace is.

For deeper SMB-specific rollouts, see our small-business phishing-training program, the 30-day simulation rollout plan, and our social-engineering tactics overview.